Responsible AI Use

Professional Development Training Course

Adopt AI responsibly, ensuring ethical, compliant, and transparent use that supports sustainable, future-ready growth. This course teaches safe practices that protect organizations. Responsible AI Use provides employees with the frameworks, safeguards, and practices required to use AI tools safely in professional settings. Participants learn how to prevent bias, protect sensitive data, validate information, and maintain transparency in all AI-assisted work.

The program includes practical risk scenarios, governance expectations, and oversight strategies that reinforce responsible behavior. Instead of restricting AI, this course empowers employees to use it confidently and correctly.

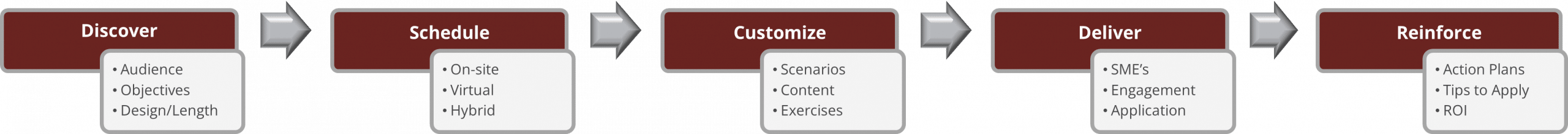

Foundational Course: Delivery & Schedule

The instructor-led course outlined below is delivered on-site in one full day or virtually in two 3.5-hour sessions. Please explore our ALF℠, shown above, to learn more about our customization, flexible durations, and delivery modality options.

Course Modules & Learning Objectives

Setting Ethical Boundaries

- Apply fairness practices that prevent biased or harmful outputs: implement safeguards that promote equity and protect against unintended consequences.
- Ensure transparency when documenting AI-assisted work: provide clear records that build trust and enable informed evaluation.
- Maintain oversight to preserve human accountability: establish governance structures that reinforce responsibility and ethical standards.
- Recognize ethical risks that require escalation: identify sensitive issues early and route them to appropriate decision-makers for resolution.

Ensuring Fair Outcomes

- Protect sensitive data through appropriate boundaries: establish clear safeguards that prevent misuse and ensure confidentiality.
- Follow the governance rules that guide compliant AI activity: adhere to standards that reinforce trust and regulatory alignment.
- Reduce regulatory exposure with safe workflows: design processes that minimize risk and maintain operational integrity.
- Apply restrictions that prevent prohibited AI actions: enforce limits that uphold ethical use and protect against violations.

Preserving Human Oversight

- Validate outputs to ensure accuracy and reliability: apply systematic checks that confirm correctness and reinforce trust in results.
- Audit AI content for consistency and traceability: establish review processes that maintain standards and provide clear accountability.
- Identify hallucinations through structured review: detect and address inaccuracies proactively to safeguard credibility and decision quality.
- Document decisions clearly when using AI insights: record rationale transparently to strengthen accountability and organizational learning.

Protecting Stakeholder Trust

- Resolve dilemmas using responsible AI decision models: apply ethical frameworks to guide choices and maintain integrity.
- Apply governance rules to realistic cases: enforce standards consistently to ensure compliance and build organizational trust.
- Strengthen workflows using corrective oversight: monitor processes actively to identify gaps and reinforce accountability.
- Create escalation paths for unsafe outputs: establish clear channels that address risks promptly and safeguard responsible use.

Who Should Attend

This course is ideal for employees, managers, and teams who rely on AI-assisted workflows or work with sensitive data and need clear, practical guidance on responsible use. It is especially valuable for organizations that are scaling AI adoption and establishing consistent norms that protect both people and operations. HR, compliance, legal, and operational leaders will find strong value in the safeguards and decision frameworks built into the course, which help participants use AI confidently while maintaining security, ethics, and organizational trust.

How AMS Can Help

We are uniquely positioned to strengthen capability across the entire organization through a structured, modern training approach grounded in our Adaptive Learning Framework℠ (ALF) and driven by our Structural Sequencing & Governance Diagnostic℠ (SSGD).

With senior-level facilitators, highly customizable courses, and multiple delivery modalities, we design learning experiences that meet participants at every level, from executives to emerging professionals, and accelerate real-world application. The ALF℠ ensures knowledge transfer at each stage, enabling individuals and teams to absorb core concepts, practice new skills, and sustain improved performance over time. This integrated approach deepens capability, enhances alignment, and results in learning that is fully embedded, practical, and built to last.

Join the ranks of leading organizations that have partnered with AMS to drive innovation, improve performance, and achieve sustainable success. Let’s transform together: your journey to excellence starts here.